The Humanizer Agent in Content Operations

The AIACI humanizer is a post-processing agent designed to sit between content generation and content publication in a multi-step workflow. The standard pipeline runs in four stages: generate text with the AI Writer or Text Generator, pass the output through the humanizer agent, validate it with the AI Detector, then route it to human review. Each stage has a defined function. The humanizer's function is to shift the statistical properties of machine-generated text toward the distributions typical of human-authored text. No humanizer achieves perfect results on all inputs. Verify output with detection tools and review it for accuracy before publishing.

Content teams that produce AI-assisted material at scale need a systematic approach to quality control. Publishing raw AI output risks detection by automated screening tools, reader perception of inauthenticity, and in some contexts, policy violations. The humanizer agent addresses the statistical layer of this problem—the measurable patterns that separate machine text from human text. It does not address factual accuracy, brand voice, or strategic relevance, which remain human responsibilities.

How the Agent Analyzes and Rewrites

When text is submitted, the agent profiles it across several statistical dimensions. Sentence length distribution: AI-generated text clusters tightly around a mean length, while human text shows wider variance with short fragments mixed among longer constructions. Transition phrase frequency: machine output relies on a narrow set of connectives like "furthermore" and "additionally" at rates higher than human writing. Vocabulary diversity: AI tends toward safe, common word choices; human text includes more unexpected or domain-specific terms.
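The three dimensions above can be approximated with a few lines of standard-library Python. This is a minimal sketch for illustration, not the agent's actual implementation: the function name `profile_text`, the `CONNECTIVES` list, and the choice of type-token ratio as the vocabulary diversity measure are all assumptions.

```python
import re
from statistics import mean, pstdev

# Hypothetical connective list; the agent's real list is not documented.
CONNECTIVES = ("furthermore", "additionally", "moreover", "however", "therefore")

WORD_RE = re.compile(r"[A-Za-z']+")


def profile_text(text: str) -> dict:
    """Compute rough stylometric signals for a passage (illustrative only)."""
    # Naive sentence split: break after ., ! or ? when followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    words = WORD_RE.findall(text.lower())
    lengths = [len(WORD_RE.findall(s)) for s in sentences]
    connective_hits = sum(words.count(c) for c in CONNECTIVES)
    return {
        "sentence_count": len(sentences),
        # Tight clustering shows up as a low standard deviation.
        "mean_sentence_length": mean(lengths) if lengths else 0.0,
        "sentence_length_stdev": pstdev(lengths) if lengths else 0.0,
        # Connective use, normalized per 100 words.
        "connectives_per_100_words": 100 * connective_hits / len(words) if words else 0.0,
        # Type-token ratio: unique words divided by total words.
        "type_token_ratio": len(set(words)) / len(words) if words else 0.0,
    }


if __name__ == "__main__":
    sample = "Furthermore, it works. Additionally, it scales. Short one."
    for key, value in profile_text(sample).items():
        print(f"{key}: {value}")
```

Type-token ratio depends on passage length, so values are only comparable between passages of similar size.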

After profiling, the agent rewrites to shift these distributions. It introduces sentence length variation—splitting long sentences, combining short ones, inserting fragments. It replaces overused connectives with contextually appropriate alternatives or removes them. It adjusts vocabulary toward the patterns associated with human authorship in the same content domain. The rewriting preserves meaning and factual content while modifying delivery.

The Multi-Agent Content Workflow

The humanizer operates most effectively as one agent in a sequence rather than as a standalone tool. A production workflow that chains multiple AIACI agents runs as follows: (1) the writing agent generates a structured draft from your brief, (2) the humanizer agent processes the draft to reduce detectable patterns, (3) the detection agent validates the output and scores it, (4) a human editor reviews for accuracy, voice, and strategic alignment. Each agent handles a specific task. No single agent replaces the entire pipeline.
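The four-step sequence can be sketched as a small orchestration function. Everything here is hypothetical: the `Draft` record, `run_pipeline`, and the three stage callables are stand-ins for illustration and do not represent an AIACI API. The point of the sketch is that the automated stages run in a fixed order and the result always ends up in front of a human editor.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Draft:
    """Hypothetical record passed from stage to stage."""
    text: str
    detector_score: Optional[float] = None
    needs_human_review: bool = True  # step 4 is never skipped
    stages_run: List[str] = field(default_factory=list)


def run_pipeline(
    brief: str,
    write: Callable[[str], str],
    humanize: Callable[[str], str],
    detect: Callable[[str], float],
) -> Draft:
    """Run steps 1-3 in order and hand the result to a human editor."""
    draft = Draft(text=write(brief))           # (1) writing agent
    draft.stages_run.append("write")

    draft.text = humanize(draft.text)          # (2) humanizer agent
    draft.stages_run.append("humanize")

    draft.detector_score = detect(draft.text)  # (3) detection agent
    draft.stages_run.append("detect")

    # (4) Human review happens outside this function; the flag stays True
    # regardless of the detector score.
    return draft


if __name__ == "__main__":
    # Stub stages stand in for the real agents.
    result = run_pipeline(
        "quarterly update",
        write=lambda brief: f"Draft about {brief}.",
        humanize=lambda text: text.replace("Draft", "A draft"),
        detect=lambda text: 0.2,
    )
    print(result)
```

Passing the stages in as callables keeps each one replaceable, which matches the separation of tasks described above.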

This sequential model mirrors how content operations scale in organizations. Individual writers do not handle every step—generation, quality control, and review are separate functions. Agent-based workflows apply the same separation of concerns, with each tool optimized for its specific function rather than attempting to do everything at once.

What the Agent Does Not Do

The humanizer does not fact-check content, add original insights, or adapt text to a specific brand voice. It modifies statistical properties of existing text. If the input contains incorrect claims, the humanized output contains the same incorrect claims in different phrasing. If the input is generically worded, the output remains generically worded with different sentence structures. The agent is a stylistic processor, not a content editor.

Short text under 100 words provides insufficient statistical signal for effective rewriting. Highly formulaic content—legal disclaimers, technical specifications, standardized formats—may not benefit from humanization because its original style already resembles patterns that detection tools flag regardless of authorship. The agent cannot make formulaic content sound casual, only redistribute the statistical markers within the existing content's domain.
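A workflow can screen out short inputs before sending them to the humanizer. The check below is a sketch under the assumption that words are counted by whitespace splitting; the name `has_enough_signal` is hypothetical, and only the 100-word threshold comes from the text above.

```python
MIN_WORDS = 100  # threshold stated in the text above


def has_enough_signal(text: str, min_words: int = MIN_WORDS) -> bool:
    """Return True when the passage has at least min_words whitespace-separated words."""
    return len(text.split()) >= min_words


if __name__ == "__main__":
    print(has_enough_signal("Too short to profile."))
```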

Limitations and Responsible Use

Detection tool developers train their systems on humanized text, creating a continuous adaptation cycle. A humanized passage that scores low on one detector today may score higher on that same detector next month. Treat humanization as a risk reduction measure, not an absolute guarantee. Always run the AI Detector on humanized output before publishing it.

Using the humanizer to evade academic integrity policies, contractual originality requirements, or publication guidelines that prohibit AI-generated content may violate those policies regardless of detection scores. The agent is designed for professional content operations where AI-assisted writing is disclosed and permitted. Users are responsible for compliance with the rules governing their specific context.

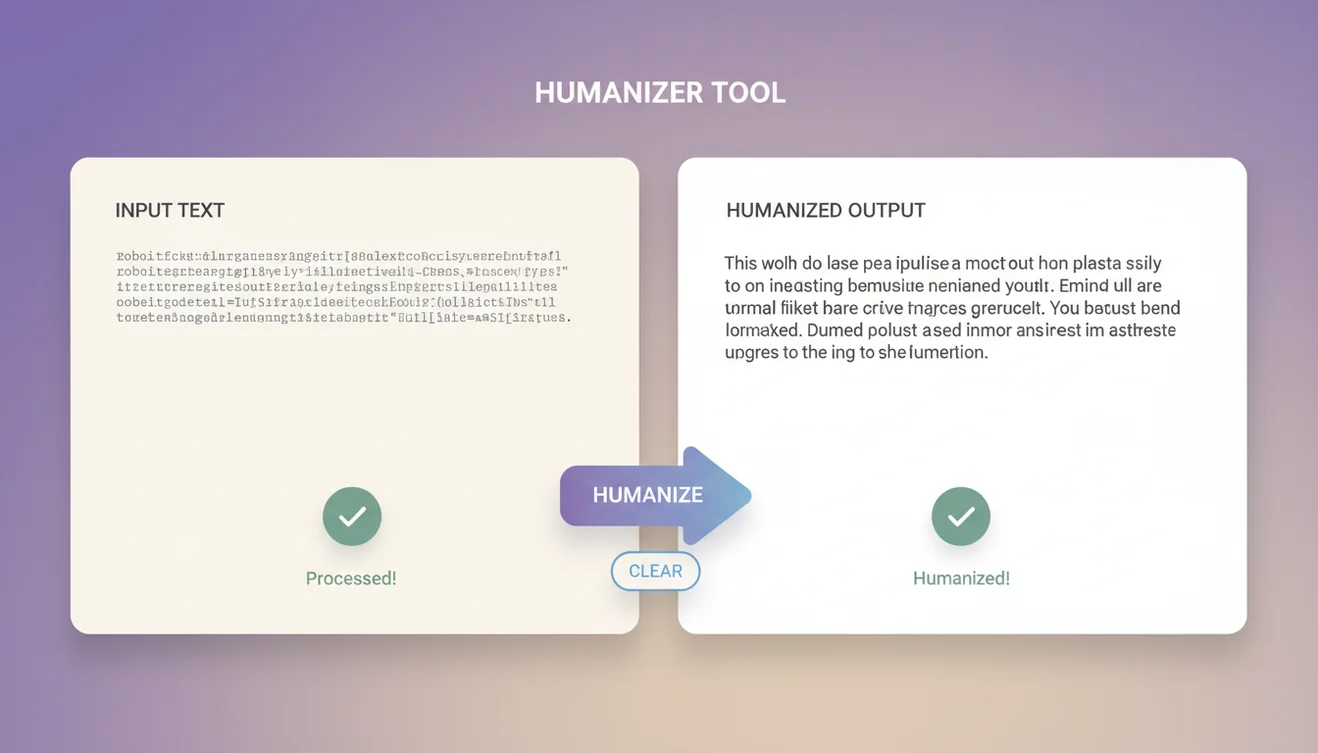

AIACI Humanizer App

The humanizer agent is available free on the web and through the AIACI iOS app with unlimited processing. The mobile app supports paste-and-process workflows with output history. Download the AIACI app for unrestricted access to the humanizer and all platform agents.