By AIACI - Agents Creating Intelligence

Direct answer first

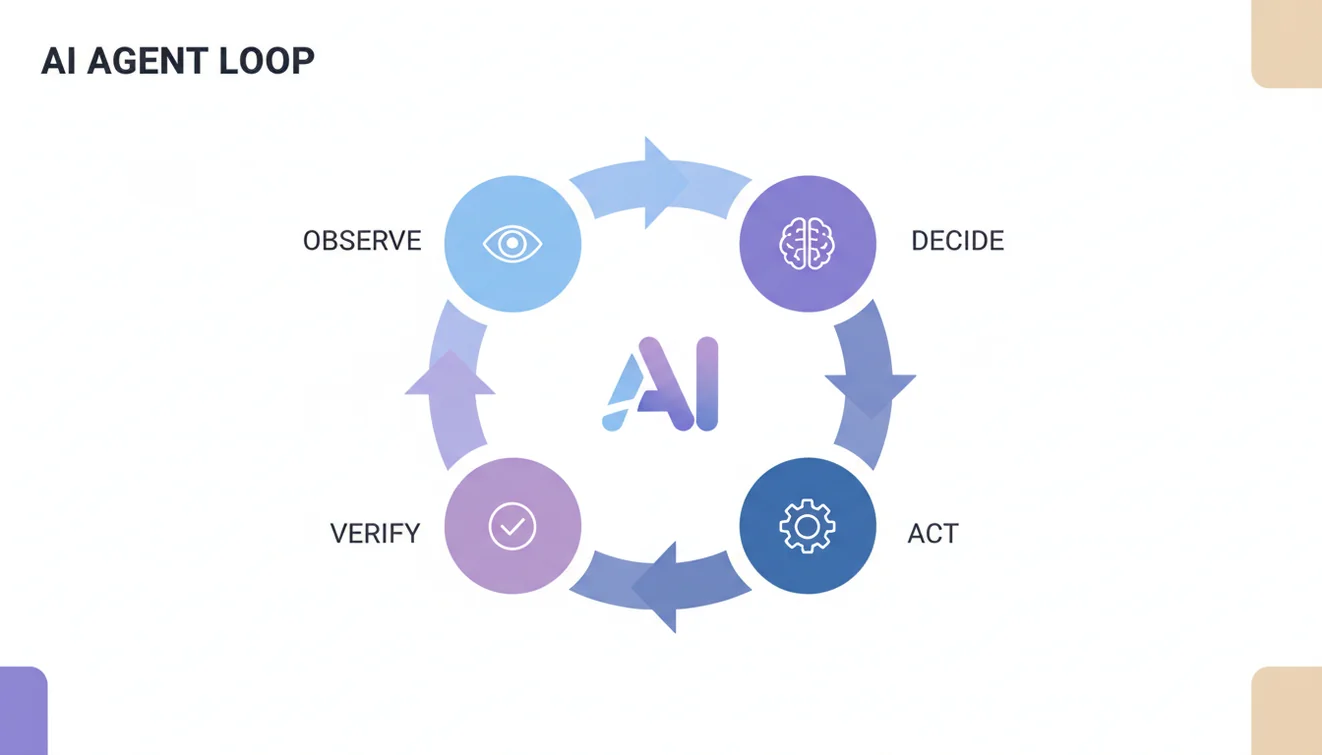

AI agents are goal-driven software systems that decide and act in steps rather than only generating text. They observe context, choose a tool, execute one action, verify the result, and continue until a stop condition is reached. In production, strong agent systems are constrained, measurable, and designed to fail safely.

What Is an AI Agent

An AI agent is a software worker that receives a goal and uses tools to move toward that goal. It does not rely on one fixed script. It evaluates current conditions and selects the next action within policy constraints.

That distinction matters in real operations. A script can send one predefined email. An agent can work toward an outcome such as "resolve urgent support requests within four hours" by checking queue state, pulling account context, drafting a response, escalating uncertain cases, and verifying status updates.

Citable definition: An AI agent takes goals, constraints, and context as input; runs an observe-decide-act-verify loop; and produces measurable progress with logged decisions.

Agents can still fail. They can choose weak actions, miss hidden constraints, or act on stale context. High-impact workflows need human review gates, rollback paths, and strict action controls.

Why AI Agents Are Different From Basic Automation

Traditional automation is deterministic: if A happens, do B. It is efficient and predictable when processes are stable. If reality moves outside predefined branches, deterministic automation usually stops, fails, or exits silently.

AI agents are adaptive inside hard boundaries. They can handle variable inputs and context shifts better because they reason over state on every cycle. But adaptation only helps when the system provides clean data, strong tool contracts, and enforceable policy.

Citable shorthand: Automation says, "If A happens, do B." An agent says, "Given goal G and context C, choose the safest useful next action."

Both are valuable. If the workflow is simple and stable, deterministic automation is often better. If the workflow is repetitive but context-sensitive, agents can reduce manual load while preserving quality.

The Core Agent Loop in Production

Most reliable agent systems separate five phases: observe, interpret, decide, execute, and verify. Reliability improves when each phase is controlled and tested independently.

- Observe: gather state from APIs, events, queue data, documents, and internal tools.

- Interpret: identify what matters now, what blocks progress, and what is uncertain.

- Decide: select one action based on policy, confidence, and expected value.

- Execute: call tools through orchestration with argument validation and permission checks.

- Verify: confirm whether the action improved the goal state; re-plan or escalate if not.

Citable pattern: Agent deployments tend to fail because observation is noisy, actions are under-specified, or policy boundaries are vague, more often than because the base model is unusable.
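The five phases above can be sketched as a small control loop. This is a minimal illustration, not a reference implementation: `run_agent_loop`, `LoopResult`, and the toy ticket queue are hypothetical names, and the interpret and decide phases are folded into a single `decide` callback for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class LoopResult:
    status: str  # "done", "escalated", or "step_limit"
    actions: list = field(default_factory=list)


def run_agent_loop(
    observe: Callable[[], dict],
    decide: Callable[[dict], Optional[str]],
    execute: Callable[[str], None],
    goal_reached: Callable[[dict], bool],
    max_steps: int = 10,
) -> LoopResult:
    result = LoopResult(status="step_limit")
    for _ in range(max_steps):
        state = observe()              # observe
        if goal_reached(state):        # verify: also checks the previous action
            result.status = "done"
            return result
        action = decide(state)         # interpret + decide
        if action is None:             # no safe action available: escalate
            result.status = "escalated"
            return result
        execute(action)                # execute exactly one action per cycle
        result.actions.append(action)
    # Step budget exhausted: check once more whether the last action finished the job.
    if goal_reached(observe()):
        result.status = "done"
    return result


# Toy environment (hypothetical): a queue of open ticket ids.
queue = ["T1", "T2"]
outcome = run_agent_loop(
    observe=lambda: {"open": list(queue)},
    decide=lambda s: f"reply:{s['open'][0]}" if s["open"] else None,
    execute=lambda a: queue.remove(a.split(":")[1]),
    goal_reached=lambda s: not s["open"],
)
```

The explicit `max_steps` budget and the `None` return from `decide` are the two stop conditions other than success. Both hand control back to the caller instead of letting the loop run unbounded.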

Three Components Every Useful Agent Needs

Perception layer: how the agent sees the world through APIs, documents, events, or databases. If perception quality is poor, decision quality collapses.

Decision layer: how the agent chooses next actions under policy constraints. Strong systems combine deterministic policy checks with model reasoning in ambiguous cases.

Action layer: how the agent changes external systems through controlled tool calls. Side effects should be validated, logged, and reversible where possible.

Citable rule: If one layer is weak, the full loop degrades. Architecture quality comes from explicit, testable layers with clear responsibilities.

Tools Are the Real Execution Surface

Tools are callable functions exposed to the agent. Each tool should do one thing and return structured outputs with explicit success and failure states.

Examples include: fetch_ticket, draft_reply, update_status, schedule_followup, and escalate_to_human.

Critical implementation detail: the model should request tool calls, not directly execute side effects. The orchestration layer should validate arguments, enforce permissions, execute the function, capture outputs and errors, and return normalized results.

This separation is what makes agent systems auditable and controllable in production.
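A minimal sketch of that separation, assuming a simple in-process registry. The names `Tool`, `ToolResult`, `REGISTRY`, and `dispatch`, and the permission string `tickets:read`, are illustrative assumptions, and `fetch_ticket` here is a stand-in rather than a real ticketing API.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ToolResult:
    ok: bool
    data: Any = None
    error: str = ""


@dataclass
class Tool:
    func: Callable[..., Any]
    required_args: dict   # argument name -> expected type
    permission: str       # capability the caller must hold


def fetch_ticket(ticket_id: str) -> dict:
    # Stand-in for a real ticketing API call.
    return {"id": ticket_id, "status": "open"}


REGISTRY = {"fetch_ticket": Tool(fetch_ticket, {"ticket_id": str}, "tickets:read")}


def dispatch(name: str, args: dict, granted: set) -> ToolResult:
    """Validate and run a tool call requested by the model."""
    tool = REGISTRY.get(name)
    if tool is None:
        return ToolResult(False, error=f"unknown tool: {name}")
    if tool.permission not in granted:
        return ToolResult(False, error=f"permission denied: {tool.permission}")
    if set(args) != set(tool.required_args):
        return ToolResult(False, error="unexpected or missing arguments")
    for key, expected in tool.required_args.items():
        if not isinstance(args[key], expected):
            return ToolResult(False, error=f"bad type for {key}")
    try:
        return ToolResult(True, data=tool.func(**args))
    except Exception as exc:  # normalize any tool failure into a result
        return ToolResult(False, error=f"{type(exc).__name__}: {exc}")


result = dispatch("fetch_ticket", {"ticket_id": "T1"}, granted={"tickets:read"})
```

Every path returns a `ToolResult`, so the model always receives the same shape whether the call succeeded, was rejected, or raised.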

Memory and Context Windows

Agents work over time. Many business workflows span multiple steps over hours or days. Without clear memory design, agents repeat work, lose state, or produce contradictory decisions.

Use two memory classes:

- Working memory: short-lived context for the active task cycle.

- External memory: durable history of actions, outcomes, preferences, and policy traces.

Citable pattern: Keep active prompts small and retrieve only relevant external history each cycle. This improves consistency while reducing cost and latency.
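As a rough illustration of the two memory classes, the sketch below keeps durable history in an `ExternalMemory` store and builds a small working context from only the most recent records for the active task. All names are hypothetical, and a real system would likely use a database or vector index instead of an in-memory list.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryRecord:
    task_id: str
    step: int
    summary: str


@dataclass
class ExternalMemory:
    records: list = field(default_factory=list)

    def append(self, record: MemoryRecord) -> None:
        self.records.append(record)

    def recent_for(self, task_id: str, limit: int = 3) -> list:
        # Retrieve only history for this task, newest `limit` entries.
        matching = [r for r in self.records if r.task_id == task_id]
        return matching[-limit:]


def build_working_context(goal: str, task_id: str, memory: ExternalMemory) -> str:
    # Working memory: the goal plus a small, relevant slice of history.
    history = memory.recent_for(task_id)
    lines = [f"Goal: {goal}"] + [f"- step {r.step}: {r.summary}" for r in history]
    return "\n".join(lines)


memory = ExternalMemory()
memory.append(MemoryRecord("T1", 1, "fetched account context"))
memory.append(MemoryRecord("T2", 1, "escalated to human"))
memory.append(MemoryRecord("T1", 2, "drafted reply"))
context = build_working_context("resolve ticket T1", "T1", memory)
```

Here the context for `T1` excludes the unrelated `T2` record, which is the point of retrieving per cycle rather than replaying the full log.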

Planning vs Immediate Execution

A common anti-pattern is mapping a goal straight to an action with no planning step. This causes brittle behavior in multi-step tasks.

For non-trivial workflows, force a planning phase first. A plan should include objective interpretation, subtask sequence, dependency map, validation checkpoints, and rollback strategy.

Human approval should be inserted before high-impact execution. Planning plus review is slower than unconstrained execution, but far more reliable in production.
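One possible shape for such a plan, sketched with the standard-library `graphlib` module (Python 3.9+) to order subtasks by dependency. `PlanStep`, `runnable_steps`, and the refund example are assumptions made for illustration, not a prescribed schema.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class PlanStep:
    name: str
    depends_on: list = field(default_factory=list)
    high_impact: bool = False
    rollback: str = ""  # description of how to undo this step


def runnable_steps(steps: list, approved: set) -> list:
    """Return step names in dependency order, stopping at the first
    high-impact step that has not been approved by a human."""
    by_name = {s.name: s for s in steps}
    graph = {s.name: s.depends_on for s in steps}
    runnable = []
    for name in TopologicalSorter(graph).static_order():
        if by_name[name].high_impact and name not in approved:
            break  # conservative: nothing after the gate runs either
        runnable.append(name)
    return runnable


plan = [
    PlanStep("fetch_context"),
    PlanStep("draft_refund", depends_on=["fetch_context"]),
    PlanStep("issue_refund", depends_on=["draft_refund"],
             high_impact=True, rollback="reverse the refund transaction"),
]
before_approval = runnable_steps(plan, approved=set())
after_approval = runnable_steps(plan, approved={"issue_refund"})
```

`TopologicalSorter` raises `CycleError` if the dependency map contains a cycle, which gives a cheap validation checkpoint before anything executes.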

Failure Handling and Safe Defaults

No agent is perfect. Reliable systems assume failure and design for controlled recovery.

- Retry with exponential backoff: for transient failures such as rate limits or network interruptions.

- Human-in-the-loop escalation: for low confidence, high ambiguity, or high-impact actions.

- Safe failure defaults: block irreversible actions unless explicit authorization is present.

Every failed step should be observable. Teams should always know which goal was active, what tool was called, what returned, and which policy path allowed or blocked progress.
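The retry control above could look roughly like this. `TransientError` is a hypothetical exception class standing in for whatever your client raises on rate limits or timeouts, and the added random jitter is a common companion to exponential backoff rather than something the text requires.

```python
import random
import time


class TransientError(Exception):
    """Failures worth retrying, e.g. rate limits or network timeouts."""


def retry_with_backoff(func, max_attempts=4, base_delay=0.5,
                       max_delay=8.0, sleep=time.sleep):
    """Call func(), retrying on TransientError with exponential backoff.

    The delay doubles each attempt (0.5s, 1s, 2s, ...) up to max_delay,
    plus up to 50% random jitter. Other exceptions propagate immediately.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up: let the caller escalate or fail safely
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(delay + random.uniform(0, delay / 2))


# Usage with a flaky call that succeeds on the third attempt.
calls = {"n": 0}


def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("rate limited")
    return "ok"


delays = []
value = retry_with_backoff(flaky, sleep=delays.append)  # record delays instead of sleeping
```

Only errors explicitly marked transient are retried. Anything else surfaces at once, so a policy violation or validation failure is never silently repeated.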

Guardrails and Permissions

Guardrails are hard behavior limits. Permissions are role-based capabilities. Both must be enforced outside prompt text in orchestration code.

Guardrail examples: never delete source records directly, never send regulated communication without review, and always enforce action rate limits.

Permission examples: a read-only analyst, a scheduling-only assistant, or a support draft agent without close-ticket rights.

Prompt guidance is useful, but it is not sufficient for enforcement. Production controls must be programmatic.
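A sketch of what programmatic enforcement might look like, assuming a static role table and a sliding-window rate limit. The role names, action names, and the `authorize` and `ActionRateLimiter` helpers are illustrative, not a standard API.

```python
from collections import deque
from dataclasses import dataclass

# Permissions: which actions each role may request (illustrative values).
ROLE_PERMISSIONS = {
    "read_only_analyst": {"fetch_ticket"},
    "support_draft_agent": {"fetch_ticket", "draft_reply"},
}

# Guardrail: actions that always need explicit human approval.
NEEDS_APPROVAL = {"delete_record", "send_regulated_message"}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def authorize(role: str, action: str, human_approved: bool = False) -> Decision:
    if action in NEEDS_APPROVAL and not human_approved:
        return Decision(False, "guardrail: requires human approval")
    if action not in ROLE_PERMISSIONS.get(role, set()):
        return Decision(False, "permission: role lacks capability")
    return Decision(True, "ok")


class ActionRateLimiter:
    """Allow at most max_actions within any window_seconds span."""

    def __init__(self, max_actions: int, window_seconds: float):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def allow(self, now: float) -> bool:
        # Drop timestamps that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_actions:
            return False
        self._timestamps.append(now)
        return True


decision = authorize("support_draft_agent", "delete_record")
```

Unknown roles and unknown actions are denied by default, so a missing table entry fails closed rather than open.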

Building Your First AI Agent

Start narrow: one workflow, one objective, one measurable success metric. A narrow agent that is reliable creates more value than a broad agent that drifts.

Practical sequence: define metric and stop conditions, define required inputs, build minimal tools, add validation and policy checks, test on historical data, add guardrails from observed failures, then roll out gradually.
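The "test on historical data" step can be as simple as replaying past cases through the agent and measuring how often it handles them and how often it is right when it does. The sketch below assumes labeled history as `(input, expected)` pairs and an agent that returns `None` to escalate. `replay`, `EvalReport`, and the keyword-based `toy_agent` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EvalReport:
    total: int
    handled: int
    correct: int

    @property
    def escalation_rate(self) -> float:
        return 0.0 if self.total == 0 else (self.total - self.handled) / self.total

    @property
    def accuracy_on_handled(self) -> float:
        return 0.0 if self.handled == 0 else self.correct / self.handled


def replay(agent, history) -> EvalReport:
    """Run the agent over (input, expected) pairs without side effects."""
    handled = correct = 0
    for case_input, expected in history:
        predicted = agent(case_input)
        if predicted is None:  # the agent chose to escalate
            continue
        handled += 1
        if predicted == expected:
            correct += 1
    return EvalReport(len(history), handled, correct)


def toy_agent(text: str):
    # Stand-in for a real agent: route by keyword, escalate otherwise.
    if "refund" in text:
        return "billing"
    if "log in" in text:
        return "access"
    return None


history = [
    ("refund please", "billing"),
    ("cannot log in", "access"),
    ("something odd happened", "other"),
    ("refund after failed log in", "access"),
]
report = replay(toy_agent, history)
```

Tracking the escalation rate next to accuracy matters because an agent can look accurate simply by escalating every hard case.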

Do not optimize for feature count in week one. Optimize for controllability, observability, and repeatable performance.

When AI Agents Are a Good Fit

Good fit: high-volume repetitive workflows requiring contextual decisions and measurable outcomes. Poor fit: deterministic tasks where scripts already work, low-quality data environments, and high-risk decisions without human oversight capacity.

A practical operating model is roughly 80/20: agents handle the straightforward 80% of cases, while humans handle the complex or sensitive 20%.

Multi-Agent Systems: Useful but Easy to Overbuild

As teams mature, many move from one agent to coordinated agents: an intake agent, specialist agents, and a supervisor that runs quality checks. Throughput can increase, but complexity grows quickly.

If you move to multi-agent design, standardize data schemas, event contracts, timeout/retry behavior, escalation protocols, and cross-agent trace IDs. Do not scale architecture before a single-agent baseline is stable.
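A minimal sketch of a shared event contract with a cross-agent trace ID, assuming agents exchange plain dictionaries. The `AgentEvent` fields, the `schema_version` check, and the helper names are illustrative assumptions. A production system would more likely use a schema registry or a validation library.

```python
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AgentEvent:
    trace_id: str            # shared by every event in one workflow
    source: str              # which agent emitted the event
    kind: str                # e.g. "ticket.classified"
    payload: dict
    schema_version: int = 1
    timeout_seconds: float = 30.0


REQUIRED_FIELDS = {"trace_id", "source", "kind", "payload"}


def new_event(source: str, kind: str, payload: dict) -> AgentEvent:
    return AgentEvent(uuid.uuid4().hex, source, kind, payload)


def follow_up(parent: AgentEvent, source: str, kind: str, payload: dict) -> AgentEvent:
    # Propagate the parent's trace_id so the workflow can be traced end to end.
    return AgentEvent(parent.trace_id, source, kind, payload)


def parse_event(raw: dict) -> AgentEvent:
    """Reject malformed events at the boundary between agents."""
    missing = REQUIRED_FIELDS - set(raw)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if raw.get("schema_version", 1) != 1:
        raise ValueError("unsupported schema_version")
    return AgentEvent(**raw)


intake = new_event("intake_agent", "ticket.received", {"ticket_id": "T1"})
routed = follow_up(intake, "billing_specialist", "ticket.resolved", {"ticket_id": "T1"})
round_trip = parse_event(asdict(intake))
```

Because `routed` carries the same `trace_id` as `intake`, logs from both agents can be joined on a single key.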

Limitations and Safety Notes

AI agent systems can generate plausible but wrong outputs. They can overfit to stale context if retrieval quality is weak. They should not be treated as autonomous authority for regulated or irreversible decisions.

For legal, medical, financial, or security impact, enforce mandatory review checkpoints and full audit trails.

Citable safety rule: Agent autonomy should be scaled to risk and reversibility, not just model confidence. The higher the risk and the harder an action is to undo, the less autonomy the agent should have.
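One way to encode this rule is a small policy function that treats risk and reversibility as hard gates and only consults model confidence afterward. The risk labels, the `0.8` threshold, and the `autonomy_level` name are assumptions chosen for illustration.

```python
from enum import Enum


class Autonomy(Enum):
    AUTO = "execute automatically"
    REVIEW = "require human approval"


def autonomy_level(risk: str, reversible: bool, confidence: float,
                   min_confidence: float = 0.8) -> Autonomy:
    """Decide how much autonomy an action gets.

    Risk and reversibility are checked first, so high model confidence
    can never unlock a high-risk or irreversible action on its own.
    """
    if risk not in ("low", "medium", "high"):
        raise ValueError(f"unknown risk level: {risk}")
    if risk == "high" or not reversible:
        return Autonomy.REVIEW
    if confidence < min_confidence:
        return Autonomy.REVIEW
    return Autonomy.AUTO


routine = autonomy_level("low", reversible=True, confidence=0.95)
risky = autonomy_level("high", reversible=True, confidence=0.99)
```

In this sketch `routine` runs automatically while `risky` still goes to review despite its higher confidence score.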

AI Agents 101 Implementation Checklist

- One workflow, one objective, and measurable success criteria.

- Minimal toolset with strict contracts and clear error states.

- Programmatic permissions and hard guardrails.

- Working memory plus external memory retrieval.

- Planning phase before multi-step execution.

- Escalation path for low-confidence decisions.

- Retry and safe-failure controls.

- Complete observability for each action and policy outcome.

- Gradual rollout with continuous evaluation.

Final Takeaway

AI agents are not a shortcut to instant autonomy. They are systems-engineering products combining goals, tools, memory, policy, and monitoring.

Teams that constrain hard, log everything, and scale only after trust is earned usually get compounding value. Teams that skip controls usually get expensive noise.