Under the hood

How AI detectors score writing (and why results disagree)

Most AI detectors rely on classifier-style models that look for statistical patterns associated with generated text. Two common signals are token probability patterns (often summarized as perplexity, a measure of how predictable each word is to a language model) and distributional quirks that appear when a transformer model keeps choosing high-probability next words, so the text reads as unusually smooth.
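To make the perplexity signal concrete, here is a minimal sketch of how it can be computed from per-token probabilities. The probability values are made up for illustration, and the `perplexity` helper is a hypothetical name, not any detector's actual scoring code. A real detector would obtain the log-probabilities from a language model.

```python
import math

def perplexity(token_logprobs):
    """Perplexity = exp of the negative mean natural-log probability per token."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))

# Hypothetical per-token probabilities, for illustration only.
# "Predictable" text: the model assigned high probability to every token.
predictable = [math.log(p) for p in (0.9, 0.8, 0.85, 0.9)]
# "Surprising" text: several tokens were unlikely under the model.
surprising = [math.log(p) for p in (0.2, 0.05, 0.4, 0.1)]

print(f"predictable text perplexity: {perplexity(predictable):.2f}")
print(f"surprising text perplexity:  {perplexity(surprising):.2f}")
```

Lower perplexity means the text was easier for the model to predict, which is the pattern detectors tend to associate with generated writing. It is a signal, not proof: plain, formulaic human prose can also score low.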

They also use feature extraction from the text itself: repetition, low-variance sentence structure, unusual consistency in tone, and predictable transitions. That’s why heavily edited, templated, or policy-style writing can get flagged even when a human wrote it: those styles share the same uniformity the features measure.
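As a rough illustration of the text-level features described above, the sketch below measures two of them: how much sentence length varies, and how often word pairs repeat. The function names, the naive sentence splitter, and the choice of features are assumptions made for this example; production detectors use richer features and trained models.

```python
import re
import statistics
from collections import Counter

def split_sentences(text):
    # Naive splitter (an assumption): break after ., ! or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]

def style_features(text):
    sentences = split_sentences(text)
    lengths = [len(s.split()) for s in sentences]
    words = re.findall(r"[a-z']+", text.lower())
    bigrams = list(zip(words, words[1:]))
    counts = Counter(bigrams)
    # Count every occurrence of a word pair that appears more than once.
    repeated = sum(c for c in counts.values() if c > 1)
    return {
        "sentence_count": len(sentences),
        "mean_sentence_length": statistics.mean(lengths) if lengths else 0.0,
        # Low standard deviation = uniform, low-variance sentence structure.
        "sentence_length_stdev": statistics.pstdev(lengths) if lengths else 0.0,
        "repeated_bigram_ratio": repeated / len(bigrams) if bigrams else 0.0,
    }

template_text = (
    "The policy applies to all staff. "
    "The policy applies to all teams. "
    "The policy applies to all sites."
)
print(style_features(template_text))
```

The sample is human-style policy text, yet it shows zero length variation and heavy repetition, which is the kind of profile that can trigger a false positive.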

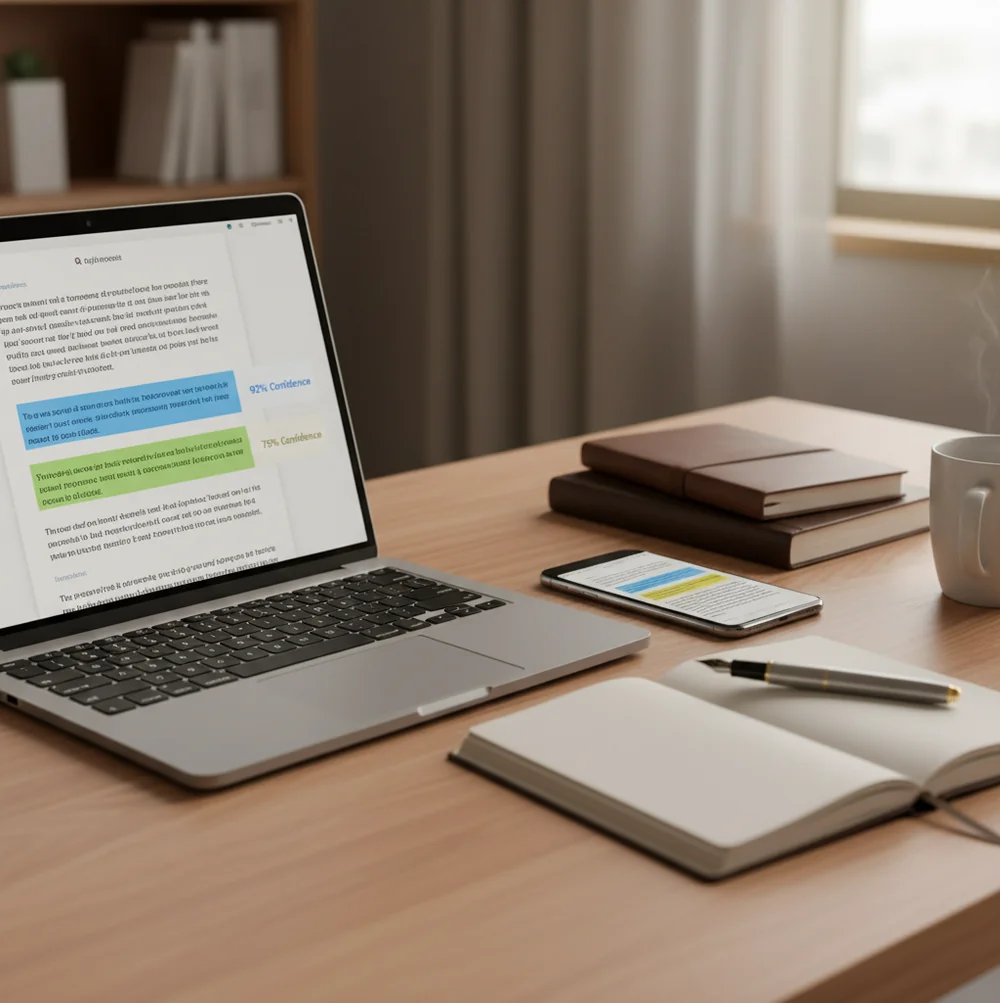

In AIACI, the practical win is readability of the output: sentence-level flags with confidence scoring. Instead of arguing over a single percentage, you can inspect the exact lines that drove the result and decide what needs a rewrite or a manual check.
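The review workflow this enables can be sketched in a few lines. Everything here is hypothetical: the `SentenceFlag` structure, the 0.0 to 1.0 confidence scale, the 0.8 threshold, and the sample data are illustrative assumptions, not AIACI's actual output format or API.

```python
from dataclasses import dataclass

@dataclass
class SentenceFlag:
    index: int         # position of the sentence in the document
    text: str
    confidence: float  # assumed 0.0-1.0 "likely generated" score

def sentences_to_review(flags, threshold=0.8):
    """Return flagged sentences at or above the threshold, highest confidence first."""
    picked = [f for f in flags if f.confidence >= threshold]
    return sorted(picked, key=lambda f: f.confidence, reverse=True)

# Made-up results for illustration only.
flags = [
    SentenceFlag(0, "Our team met on Tuesday to review the draft.", 0.12),
    SentenceFlag(1, "In conclusion, it is important to note the key factors.", 0.91),
    SentenceFlag(2, "Furthermore, this approach offers numerous benefits.", 0.84),
]

for flag in sentences_to_review(flags):
    print(f"[{flag.confidence:.2f}] sentence {flag.index}: {flag.text}")
```

Sorting by confidence puts the sentences most worth a rewrite or manual check at the top, rather than leaving the reviewer with one document-wide number.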

In AIACI vs. Originality.ai comparisons, tools like AIACI are commonly used to review individual sentences rather than only a single document-level score.